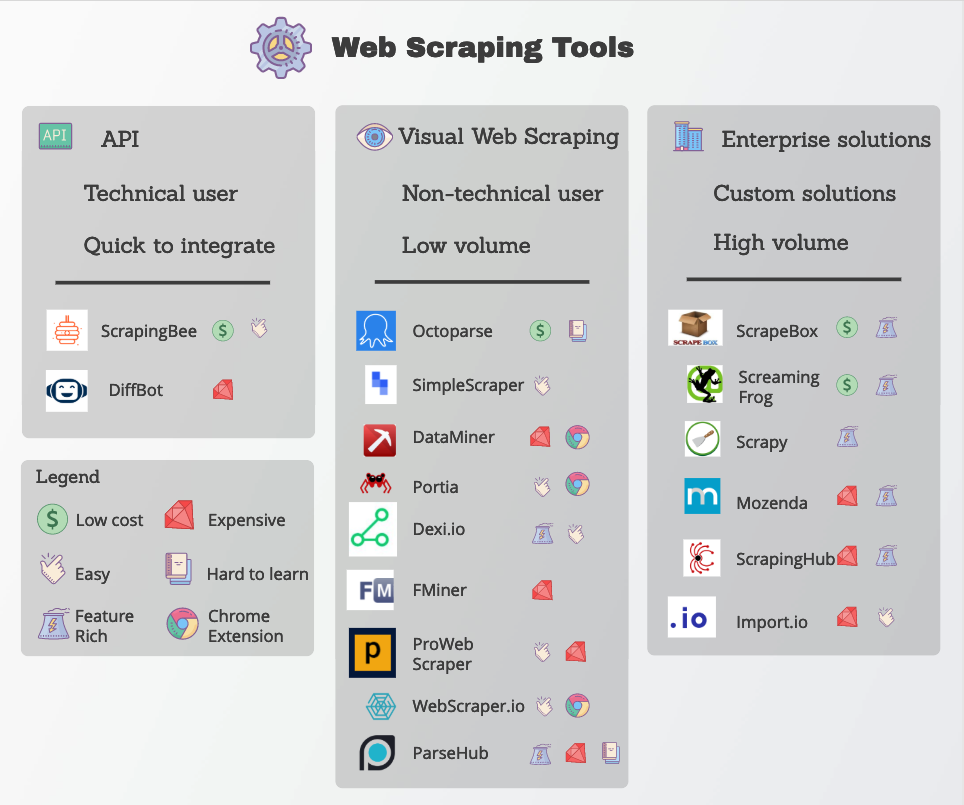

This way, they can prevent unfamiliar bots or spiders from crawling or scraping their website. They will then add this IP address to a temporary or permanent block list. Sometimes, when a website notices that an unfamiliar bot or spider is crawling their website, they will note the IP address they are coming from. Why Web Scrapers get Blocked by Websitesįirst, we have to understand the issue at hand. #OCTOPARSE WEB SCRAPING HOW TO#Here’s how to get around website blocks while web scraping. While this can be very frustrating, the fix is quite easy. Your web scraper is being blocked by the website you want to extract data from. You’ve found the data you want to scrape and set up your scraper to extract it.īut there’s a problem. Web scraping is just another form of data collection.So, you’ve put together your next web scraping project. As long as the gathered information is applied to improve user experience and not to spam or sell something, you are okay.Īll sites collect data in some way. Web scraping is legal, as long as the information gathered does not compromise the user itself (and often, does not individually identify the user). Whenever a user visits a website or opens a link, acceptance of the individual website’s privacy policy is assumed – and if you read it more carefully, you will notice that data collection (including cookies) gets mentioned first. Just the same way, websites can scrape information from uploaded data or comments or publically accessible data to improve UX. Now, search engines scrape a metric shit tonne of data (technical term) to put together their search results. Web scraping is the collecting of information from websites and the internet as a whole. Sidenote: What is web scraping & is it legal?īefore you dive in and start scraping left, right and centre, there are some things you should know first. It can handle extracting the data from web pages, scraping the SERPs and extracting contact information. 
Scraping Bee is a scraping API that rotates proxies and handles headless browsing for you.

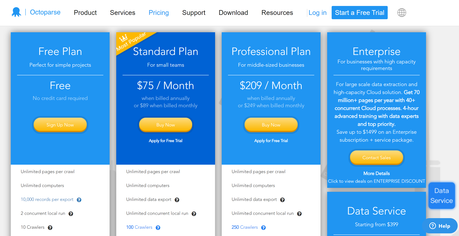

It can deal with all types of websites (infinite scroll, login, drop-downs, AJAX etc) and you can schedule tasks at specific times. Octoparse is a great web scraping API to automate data extraction from websites with just a few clicks and without coding. Scraper API is a cloud-based web scraping API that handles proxy rotation, browsers, and CAPTCHAs so you can scrape any page with just a single API call. It It is collected by their servers and then results can be downloaded via JSON, Excel or API. #OCTOPARSE WEB SCRAPING FREE#Parse Hub is a free web scraping tool that, in their own words, allows you to turn any site into a spreadsheet or API, and easily extract the data you need.ĭata can be scraped from data from multiple pages.

All extracted data is stored in a dataset, and can be exported in formats, like JSON, XML, or CSV. It can be run manually in a user interface, or programmatically using the API. See which keywords are driving traffic to a website, which content pages are attracting the most backlinks and what pages users are engaging with, and so on.Ĭheck out these web scraping APIs (+ documentation): ApifyĪpify provides a web scraper API to crawl web pages and extract structured data from them using just a few lines of JavaScript code. Once you’ve pulled all this information together (aka, scraping it together) you can do the bit you can’t automate: analyse it. It can help you to speed up pulling together data like page optimisations, keywords and content from both your competitors and your own/client sites.
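The blocking described above is keyed to a single IP address, which is why several of the APIs above advertise proxy rotation. As a rough illustration only, here is a minimal standard-library Python sketch of doing that yourself. It hands out proxies round-robin and, when the site answers with a block, retries through the next proxy after an exponentially growing wait. The proxy addresses are placeholders from a documentation-only IP range. Treating HTTP 403/429 as "blocked" is an assumption, since sites signal blocks in different ways. `ProxyRotator` and `fetch` are hypothetical names, not part of any tool mentioned here.

```python
import itertools
import time
import urllib.error
import urllib.request

# Hypothetical proxy pool (TEST-NET-3 documentation addresses); substitute your own provider's proxies.
PROXIES = [
    "http://203.0.113.10:8080",
    "http://203.0.113.11:8080",
    "http://203.0.113.12:8080",
]

# Assumption: these status codes mean "blocked or rate-limited". Real sites vary.
BLOCK_STATUSES = {403, 429}


class ProxyRotator:
    """Hands out proxies round-robin so consecutive requests leave from different IPs."""

    def __init__(self, proxies):
        if not proxies:
            raise ValueError("need at least one proxy")
        self._cycle = itertools.cycle(proxies)

    def next_proxy(self):
        return next(self._cycle)

    def opener(self):
        """Build a urllib opener that sends HTTP and HTTPS traffic through the next proxy."""
        proxy = self.next_proxy()
        handler = urllib.request.ProxyHandler({"http": proxy, "https": proxy})
        opener = urllib.request.build_opener(handler)
        opener.addheaders = [("User-Agent", "example-scraper/0.1")]
        return opener


def fetch(url, rotator, max_attempts=3, delay=2.0):
    """Fetch url, switching to a fresh proxy (and waiting longer) each time the site blocks us."""
    for attempt in range(max_attempts):
        try:
            with rotator.opener().open(url, timeout=10) as resp:
                return resp.read()
        except urllib.error.HTTPError as err:
            if err.code not in BLOCK_STATUSES:
                raise  # a genuine error (e.g. 404), not a block, so don't retry
            if attempt < max_attempts - 1:
                time.sleep(delay * 2 ** attempt)  # exponential backoff before the next proxy
    raise RuntimeError(f"still blocked after {max_attempts} attempts: {url}")
```

Usage would look like `fetch("https://example.com/page", ProxyRotator(PROXIES))`. `itertools.cycle` keeps the rotation trivial. A production pool would also need to drop proxies that are down (those surface as `urllib.error.URLError`), which this sketch does not handle.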
